We've done the following:


Jul 22 2021

Jul 21 2021

Jul 20 2021

Jul 19 2021

Jun 28 2021

The lag on the topics has recovered.

The configuration update of moma will be tracked in T3373.

The lag has recovered, the search on webapp1[1] is now fully up-to-date and can be tested before changing the configuration on the main webapp.

Jun 23 2021

The metadata indexation is finished; https://webapp1.internal.softwareheritage.org can now search them via Elasticsearch without any issue.

Let's now wait for the lag on the origin* topics to recover: https://grafana.softwareheritage.org/goto/iOvBK6gnk?orgId=1

About 1 day remains to consume the origin* topics.

The metadata have been completely ingested, so the metadata search can be tested on webapp1 once its configuration is updated to use the new index.
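An estimate like "about 1 day remains" comes down to consumer lag arithmetic: the lag is the sum over partitions of (log end offset - committed offset), and it shrinks at the consume rate minus the produce rate. A minimal sketch, assuming the offsets and rates have already been fetched (for instance from the metrics behind the Grafana dashboard); the helper names and the figures in the example are hypothetical.

```python
from typing import Dict


def consumer_lag(end_offsets: Dict[int, int], committed: Dict[int, int]) -> int:
    """Total lag of a consumer group on one topic.

    Per partition: log end offset minus the group's committed offset.
    Partitions without a committed offset are counted from 0, which
    ignores retention (a simplification made for this sketch).
    """
    return sum(end - committed.get(partition, 0)
               for partition, end in end_offsets.items())


def hours_to_catch_up(lag: int, consume_rate: float, produce_rate: float) -> float:
    """Hours until the lag reaches 0, assuming constant rates in messages/second."""
    net_rate = consume_rate - produce_rate
    if net_rate <= 0:
        return float("inf")  # the client never catches up at these rates
    return lag / net_rate / 3600


# Hypothetical figures: 8.64M messages behind, consuming 150 msg/s while
# 50 msg/s keep arriving on the topics.
lag = consumer_lag({0: 5_000_000, 1: 4_640_000}, {0: 1_000_000})
print(lag, hours_to_catch_up(lag, consume_rate=150, produce_rate=50))  # 8640000 24.0
```

The produce rate matters: a client that consumes faster than messages arrive can still need a long time when the topics keep receiving new origins.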

Jun 22 2021

The reindexation should be done by the end of the day.

- journal clients started:

root@search1:~/T3398# swh search --config-file journal_client_objects.yml journal-client objects
INFO:elasticsearch:POST http://search-esnode4.internal.softwareheritage.org:9200/origin-v0.9.0-write/_bulk [status:200 request:0.013s]
INFO:elasticsearch:POST http://search-esnode5.internal.softwareheritage.org:9200/origin-v0.9.0-write/_bulk [status:200 request:0.014s]
INFO:elasticsearch:POST http://search-esnode6.internal.softwareheritage.org:9200/origin-v0.9.0-write/_bulk [status:200 request:0.012s]
INFO:elasticsearch:POST http://search-esnode4.internal.softwareheritage.org:9200/origin-v0.9.0-write/_bulk [status:200 request:0.012s]
...

root@search1:~/T3398# swh search --config-file journal_client_indexed.yml journal-client objects
INFO:elasticsearch:POST http://search-esnode4.internal.softwareheritage.org:9200/origin-v0.9.0-write/_bulk [status:200 request:0.758s]
INFO:elasticsearch:POST http://search-esnode5.internal.softwareheritage.org:9200/origin-v0.9.0-write/_bulk [status:200 request:0.023s]
INFO:elasticsearch:POST http://search-esnode6.internal.softwareheritage.org:9200/origin-v0.9.0-write/_bulk [status:200 request:0.024s]
INFO:elasticsearch:POST http://search-esnode4.internal.softwareheritage.org:9200/origin-v0.9.0-write/_bulk [status:200 request:0.023s]
...

- journal clients configuration prepared:

root@search1:~/T3398# diff -U3 /etc/softwareheritage/search/journal_client_objects.yml journal_client_objects.yml
--- /etc/softwareheritage/search/journal_client_objects.yml  2021-06-10 08:08:19.555062808 +0000
+++ journal_client_objects.yml  2021-06-22 09:19:04.841898294 +0000
@@ -8,13 +8,18 @@
       port: 9200
     - host: search-esnode6.internal.softwareheritage.org
       port: 9200
+    indexes:
+      origin:
+        index: origin-v0.9.0
+        read_alias: origin-v0.9.0-read
+        write_alias: origin-v0.9.0-write
 journal:
   brokers:
   - kafka1.internal.softwareheritage.org
   - kafka2.internal.softwareheritage.org
   - kafka3.internal.softwareheritage.org
   - kafka4.internal.softwareheritage.org
-  group_id: swh.search.journal_client
+  group_id: swh.search.journal_client-v0.9.0
   prefix: swh.journal.objects
   object_types:
   - origin

root@search1:~/T3398# diff -U3 /etc/softwareheritage/search/journal_client_indexed.yml journal_client_indexed.yml
--- /etc/softwareheritage/search/journal_client_indexed.yml  2021-06-10 09:34:00.980897650 +0000
+++ journal_client_indexed.yml  2021-06-22 09:27:18.507340257 +0000
@@ -8,13 +8,18 @@
       port: 9200
     - host: search-esnode6.internal.softwareheritage.org
       port: 9200
+    indexes:
+      origin:
+        index: origin-v0.9.0
+        read_alias: origin-v0.9.0-read
+        write_alias: origin-v0.9.0-write
 journal:
   brokers:
   - kafka1.internal.softwareheritage.org
   - kafka2.internal.softwareheritage.org
   - kafka3.internal.softwareheritage.org
   - kafka4.internal.softwareheritage.org
-  group_id: swh.search.journal_client.indexed
+  group_id: swh.search.journal_client.indexed-v0.9.0
   prefix: swh.journal.indexed
   object_types:
   - origin_intrinsic_metadata

- new index initialized:

root@search1:~/T3398# diff -U3 /etc/softwareheritage/search/server.yml server.yml
--- /etc/softwareheritage/search/server.yml  2021-06-10 08:08:17.819058015 +0000
+++ server.yml  2021-06-22 09:11:16.132518743 +0000
@@ -10,7 +10,7 @@
       port: 9200
     indexes:
       origin:
-        index: origin-production
-        read_alias: origin-read
-        write_alias: origin-write
+        index: origin-v0.9.0
+        read_alias: origin-v0.9.0-read
+        write_alias: origin-v0.9.0-write
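All three diffs follow one naming convention: the index, both aliases and the Kafka consumer group ids get a `-v<version>` suffix. A fresh group id means the new journal clients have no committed offsets and, assuming they start from the earliest offset (which is what the backfill in this task suggests), re-read the whole topics into the new index while the old clients and index stay untouched. A minimal sketch of the convention; `versioned_search_config` and its `group_ids` key are hypothetical and not part of swh-search.

```python
def versioned_search_config(version: str) -> dict:
    """Derive the versioned names used in the configuration diffs above.

    Hypothetical helper: the "indexes" part mirrors the shape of the
    server.yml section; "group_ids" is only a convenient grouping here,
    not an actual swh-search configuration key.
    """
    index = f"origin-v{version}"
    return {
        "indexes": {
            "origin": {
                "index": index,
                "read_alias": f"{index}-read",
                "write_alias": f"{index}-write",
            }
        },
        # New group ids: Kafka sees brand new consumers with no offsets.
        "group_ids": {
            "objects": f"swh.search.journal_client-v{version}",
            "indexed": f"swh.search.journal_client.indexed-v{version}",
        },
    }


cfg = versioned_search_config("0.9.0")
print(cfg["indexes"]["origin"]["read_alias"])  # origin-v0.9.0-read
```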

On search1:

- puppet disabled

- swh-search / journal clients stopped

- packages updated:

apt list --upgradable 2>/dev/null | grep python3-swh | cut -f1 -d'/' | xargs apt install -V --dry-run
...
The following packages will be upgraded:
   python3-swh.core (0.13.0-1~swh1~bpo10+1 => 0.14.3-1~swh1~bpo10+1)
   python3-swh.indexer (0.7.0-1~swh1~bpo10+1 => 0.8.0-1~swh1~bpo10+1)
   python3-swh.indexer.storage (0.7.0-1~swh1~bpo10+1 => 0.8.0-1~swh1~bpo10+1)
   python3-swh.journal (0.7.1-1~swh1~bpo10+1 => 0.8.0-1~swh1~bpo10+1)
   python3-swh.model (2.3.0-1~swh1~bpo10+1 => 2.6.1-1~swh1~bpo10+1)
   python3-swh.objstorage (0.2.2-1~swh1~bpo10+1 => 0.2.3-1~swh1~bpo10+1)
   python3-swh.scheduler (0.10.0-1~swh1~bpo10+1 => 0.15.0-1~swh1~bpo10+1)
   python3-swh.search (0.8.0-1~swh1~bpo10+1 => 0.9.0-1~swh1~bpo10+1)
   python3-swh.storage (0.27.2-1~swh1~bpo10+1 => 0.30.1-1~swh1~bpo10+1)
9 upgraded, 0 newly installed, 0 to remove and 8 not upgraded.
...

- Index initialisation done and swh-search restarted:

root@search1:~# swh search -C /etc/softwareheritage/search/server.yml initialize
INFO:elasticsearch:HEAD http://search-esnode6.internal.softwareheritage.org:9200/origin-production [status:200 request:0.025s]
INFO:elasticsearch:HEAD http://search-esnode4.internal.softwareheritage.org:9200/origin-read/_alias [status:200 request:0.018s]
INFO:elasticsearch:HEAD http://search-esnode5.internal.softwareheritage.org:9200/origin-write/_alias [status:200 request:0.003s]
INFO:elasticsearch:PUT http://search-esnode6.internal.softwareheritage.org:9200/origin-production/_mapping [status:200 request:0.102s]
Done.
root@search1:~# systemctl start gunicorn-swh-search.service

- journal clients restarted with no errors in the logs; the search is still working from the webapp
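The `initialize` runs in this task look idempotent: against an existing index (the log above) only HEAD checks and a mapping PUT are issued, while against a fresh index (the Jun 17 staging run) the index and both aliases are created first. A sketch of that decision logic as inferred from the logged requests only; it is not the swh-search implementation, and `plan_initialize` is a hypothetical name.

```python
from typing import List, Set, Tuple

Request = Tuple[str, str]  # (HTTP method, path)


def plan_initialize(index: str, read_alias: str, write_alias: str,
                    indices: Set[str], aliases: Set[str]) -> List[Request]:
    """Requests needed to initialize an origin index and its two aliases.

    Inferred from the `swh search initialize` logs; `indices` and `aliases`
    are the names that already exist (what the HEAD requests check).
    """
    requests: List[Request] = []
    if index not in indices:
        requests.append(("PUT", f"/{index}"))
    for alias in (read_alias, write_alias):
        if alias not in aliases:
            requests.append(("PUT", f"/{index}/_alias/{alias}"))
    # The mapping is pushed every time, so new fields also reach an existing index.
    requests.append(("PUT", f"/{index}/_mapping"))
    return requests


fresh = plan_initialize("origin-v0.9.0", "origin-v0.9.0-read", "origin-v0.9.0-write",
                        indices=set(), aliases=set())
existing = plan_initialize("origin-production", "origin-read", "origin-write",
                           indices={"origin-production"},
                           aliases={"origin-read", "origin-write"})
print(len(fresh), existing)  # 4 [('PUT', '/origin-production/_mapping')]
```

This ordering is also why the aliases have to exist before the journal clients start writing through the write alias, as noted in the Mar 5 deployment steps.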

Jun 21 2021

Jun 18 2021

The backfill is done.

- Puppet is reactivated

- The index configuration was reset to the defaults:

root@search0:/etc/softwareheritage/search# export ES_SERVER=192.168.130.80:9200
root@search0:/etc/softwareheritage/search# export INDEX=origin-v0.9.0
root@search0:/etc/softwareheritage/search# cat >/tmp/config.json <<EOF
> {
> "index" : {
> "translog.sync_interval" : null,
> "translog.durability": null,
> "refresh_interval": null
> }
> }
> EOF
root@search0:/etc/softwareheritage/search# curl -s -H "Content-Type: application/json" -XPUT http://${ES_SERVER}/${INDEX}/_settings -d @/tmp/config.json
{"acknowledged":true}

The configuration of the index was changed to increase the reindexation speed:

root@search-esnode0:~# export ES_SERVER=192.168.130.80:9200
root@search-esnode0:~# export INDEX=origin-v0.9.0
root@search-esnode0:~# cat >/tmp/config.json <<EOF
> {
> "index" : {
> "translog.sync_interval" : "60s",
> "translog.durability": "async",
> "refresh_interval": "60s"
> }
> }
> EOF
root@search-esnode0:~# curl -s -H "Content-Type: application/json" -XPUT http://${ES_SERVER}/${INDEX}/_settings -d @/tmp/config.json

The default settings will be restored once the journal clients have recovered.
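The two `_settings` calls above use the same three keys: the speed-up sets them, and the restore sends `null` for each, which makes Elasticsearch fall back to the default value. A minimal sketch with only the standard library, assuming the same endpoint layout as the curl commands; `put_settings` and `reset_settings` are hypothetical helpers, and `put_settings` is only defined here, never called.

```python
import json
import urllib.request

# Values used in this task while the journal clients backfill the new index:
# fewer translog fsyncs and fewer refreshes mean cheaper bulk writes.
BULK_SETTINGS = {
    "index": {
        "translog.sync_interval": "60s",
        "translog.durability": "async",
        "refresh_interval": "60s",
    }
}


def reset_settings(settings: dict) -> dict:
    """Same keys with null values: Elasticsearch resets them to their defaults."""
    return {"index": {key: None for key in settings["index"]}}


def put_settings(es_server: str, index: str, settings: dict) -> dict:
    """PUT /<index>/_settings, equivalent to the curl commands above."""
    request = urllib.request.Request(
        f"http://{es_server}/{index}/_settings",
        data=json.dumps(settings).encode(),
        headers={"Content-Type": "application/json"},
        method="PUT",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


print(json.dumps(reset_settings(BULK_SETTINGS)))
```

Usage would be `put_settings("192.168.130.80:9200", "origin-v0.9.0", BULK_SETTINGS)` before the backfill and `put_settings(..., reset_settings(BULK_SETTINGS))` afterwards. With `async` durability, writes acknowledged since the last translog sync can be lost if a node crashes, which is presumably acceptable during a backfill that can be replayed from Kafka, but not as a permanent setting.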

The underlying index of the aliases was switched to the new index for both read and write:

root@search0:~/T3391# curl -XPOST -H 'Content-Type: application/json' http://search-esnode0:9200/_aliases -d '
> {
> "actions" : [
> { "remove" : { "index" : "origin", "alias" : "origin-read" } },
> { "remove" : { "index" : "origin", "alias" : "origin-write" } },
> { "add" : { "index" : "origin-v0.9.0", "alias" : "origin-read" } },
> { "add" : { "index" : "origin-v0.9.0", "alias" : "origin-write" } }
> ]
> }'

It seems the swh.journal.indexed.origin_intrinsic_metadata topic was created automatically, so no retention policy was specified (and there is only one partition (!)).

% /opt/kafka/bin/kafka-topics.sh --bootstrap-server $SERVER --describe --topic swh.journal.indexed.origin_intrinsic_metadata
Topic: swh.journal.indexed.origin_intrinsic_metadata  PartitionCount: 1  ReplicationFactor: 1  Configs: max.message.bytes=104857600
    Topic: swh.journal.indexed.origin_intrinsic_metadata  Partition: 0  Leader: 1  Replicas: 1  Isr: 1
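The `_aliases` request in this entry repoints both aliases in a single call. Elasticsearch applies all the actions of one `_aliases` request atomically, so readers never see an alias that points to no index or to both. A small generator for that body, assuming the same remove-then-add layout as the curl command; `alias_swap_actions` is a hypothetical helper name.

```python
import json
from typing import Dict, List


def alias_swap_actions(old_index: str, new_index: str,
                       aliases: List[str]) -> Dict[str, list]:
    """Body for POST /_aliases moving every alias from old_index to new_index."""
    # Elasticsearch applies all actions of one _aliases request atomically,
    # so the removes and the adds take effect together.
    actions: list = [{"remove": {"index": old_index, "alias": a}} for a in aliases]
    actions += [{"add": {"index": new_index, "alias": a}} for a in aliases]
    return {"actions": actions}


body = alias_swap_actions("origin", "origin-v0.9.0", ["origin-read", "origin-write"])
print(json.dumps(body, indent=1))
```

A rollback is the same call with the two index names exchanged, as long as the old index is kept around.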

Jun 17 2021

- Temporary configuration created:

- objects:

root@search0:~/T3391# diff -U3 /etc/softwareheritage/search/journal_client_objects.yml journal_client_objects.yml
--- /etc/softwareheritage/search/journal_client_objects.yml  2020-12-10 11:04:08.460777825 +0000
+++ journal_client_objects.yml  2021-06-17 16:48:56.006110527 +0000
@@ -4,10 +4,15 @@
     hosts:
     - host: search-esnode0.internal.staging.swh.network
       port: 9200
+    indexes:
+      origin:
+        index: origin-v0.9.0
+        read_alias: origin-v0.9.0-read
+        write_alias: origin-v0.9.0-write
 journal:
   brokers:
   - journal0.internal.staging.swh.network
-  group_id: swh.search.journal_client
+  group_id: swh.search.journal_client-v0.9.0
   prefix: swh.journal.objects
   object_types:
   - origin

- indexed:

root@search0:~/T3391# diff -U3 /etc/softwareheritage/search/journal_client_indexed.yml journal_client_indexed.yml
--- /etc/softwareheritage/search/journal_client_indexed.yml  2021-02-09 17:48:44.269681575 +0000
+++ journal_client_indexed.yml  2021-06-17 16:49:57.926120227 +0000
@@ -4,10 +4,15 @@
     hosts:
     - host: search-esnode0.internal.staging.swh.network
       port: 9200
+    indexes:
+      origin:
+        index: origin-v0.9.0
+        read_alias: origin-v0.9.0-read
+        write_alias: origin-v0.9.0-write
 journal:
   brokers:
   - journal0.internal.staging.swh.network
-  group_id: swh.search.journal_client.indexed
+  group_id: swh.search.journal_client.indexed-v0.9.0
   prefix: swh.journal.indexed
   object_types:
   - origin_intrinsic_metadata

- upgrade packages:

root@search0:~/T3391# apt list --upgradable
Listing... Done
libnss-systemd/buster-backports 247.3-5~bpo10+1 amd64 [upgradable from: 247.3-3~bpo10+1]
libpam-systemd/buster-backports 247.3-5~bpo10+1 amd64 [upgradable from: 247.3-3~bpo10+1]
libsystemd0/buster-backports 247.3-5~bpo10+1 amd64 [upgradable from: 247.3-3~bpo10+1]
libudev1/buster-backports 247.3-5~bpo10+1 amd64 [upgradable from: 247.3-3~bpo10+1]
python3-swh.core/unknown 0.14.3-1~swh1~bpo10+1 all [upgradable from: 0.13.0-1~swh1~bpo10+1]
python3-swh.indexer.storage/unknown 0.8.0-1~swh1~bpo10+1 all [upgradable from: 0.7.0-1~swh1~bpo10+1]
python3-swh.indexer/unknown 0.8.0-1~swh1~bpo10+1 all [upgradable from: 0.7.0-1~swh1~bpo10+1]
python3-swh.model/unknown 2.6.1-1~swh1~bpo10+1 all [upgradable from: 2.3.0-1~swh1~bpo10+1]
python3-swh.objstorage/unknown 0.2.3-1~swh1~bpo10+1 all [upgradable from: 0.2.2-1~swh1~bpo10+1]
python3-swh.scheduler/unknown 0.15.0-1~swh1~bpo10+1 all [upgradable from: 0.10.0-1~swh1~bpo10+1]
python3-swh.search/unknown 0.9.0-1~swh1~bpo10+1 all [upgradable from: 0.8.0-1~swh1~bpo10+1]
python3-swh.storage/unknown 0.30.1-1~swh1~bpo10+1 all [upgradable from: 0.27.2-1~swh1~bpo10+1]
systemd-sysv/buster-backports 247.3-5~bpo10+1 amd64 [upgradable from: 247.3-3~bpo10+1]
systemd-timesyncd/buster-backports 247.3-5~bpo10+1 amd64 [upgradable from: 247.3-3~bpo10+1]
systemd/buster-backports 247.3-5~bpo10+1 amd64 [upgradable from: 247.3-3~bpo10+1]
udev/buster-backports 247.3-5~bpo10+1 amd64 [upgradable from: 247.3-3~bpo10+1]

- Initialize the new index and aliases:

root@search0:~/T3391# swh search --config-file journal_client_objects.yml initialize
INFO:elasticsearch:PUT http://search-esnode0.internal.staging.swh.network:9200/origin-v0.9.0 [status:200 request:5.373s]
INFO:elasticsearch:PUT http://search-esnode0.internal.staging.swh.network:9200/origin-v0.9.0/_alias/origin-v0.9.0-read [status:200 request:0.052s]
INFO:elasticsearch:PUT http://search-esnode0.internal.staging.swh.network:9200/origin-v0.9.0/_alias/origin-v0.9.0-write [status:200 request:0.038s]
INFO:elasticsearch:PUT http://search-esnode0.internal.staging.swh.network:9200/origin-v0.9.0/_mapping [status:200 request:0.086s]
Done.

- starting the origin* journal client:

root@search0:~/T3391# swh search --config-file journal_client_objects.yml journal-client objects
INFO:elasticsearch:POST http://search-esnode0.internal.staging.swh.network:9200/origin-v0.9.0-write/_bulk [status:200 request:0.661s]
INFO:elasticsearch:POST http://search-esnode0.internal.staging.swh.network:9200/origin-v0.9.0-write/_bulk [status:200 request:1.671s]
INFO:elasticsearch:POST http://search-esnode0.internal.staging.swh.network:9200/origin-v0.9.0-write/_bulk [status:200 request:2.047s]
INFO:elasticsearch:POST http://search-esnode0.internal.staging.swh.network:9200/origin-v0.9.0-write/_bulk [status:200 request:0.179s]

- starting the indexed metadata journal client:

root@search0:~/T3391# swh search --config-file journal_client_indexed.yml journal-client objects

- Index status:

root@search0:~/T3391# curl -s http://search-esnode0:9200/_cat/indices\?v
health status index                       uuid                   pri rep docs.count docs.deleted store.size pri.store.size
green  open   origin                      HthJj42xT5uO7w3Aoxzppw  80   0    1320217       166798      1.7gb          1.7gb
green  open   origin-v0.9.0               o7FiYJWnTkOViKiAdCXCuA  80   0     263556          400    214.8mb        214.8mb
green  close  origin-backup-20210209-1736 P1CKjXW0QiWM5zlzX46-fg  80   0
green  close  origin-v0.5.0               SGplSaqPR_O9cPYU4ZsmdQ  80   0
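The `_cat/indices` output above can serve as a rough progress indicator for the reindexation by comparing the document counts of the old and new indices. A minimal parsing sketch, assuming whitespace-separated `?v` output as shown; the helper names are hypothetical. `docs.count` is a Lucene-level count (nested documents included) and the new index can legitimately differ from the old one, so treat the ratio as an estimate only.

```python
from typing import Dict


def parse_cat_indices(text: str) -> Dict[str, Dict[str, str]]:
    """Parse `_cat/indices?v` output into {index name: {column: value}}.

    Closed indices only report the first columns; zip() stops there.
    """
    lines = [line.split() for line in text.strip().splitlines()]
    header, rows = lines[0], lines[1:]
    index_col = header.index("index")
    return {row[index_col]: dict(zip(header, row)) for row in rows}


def reindex_progress(indices: Dict[str, Dict[str, str]], old: str, new: str) -> float:
    """Rough progress: documents in the new index / documents in the old one."""
    return int(indices[new]["docs.count"]) / int(indices[old]["docs.count"])


SAMPLE = """\
health status index         uuid                   pri rep docs.count docs.deleted store.size pri.store.size
green  open   origin        HthJj42xT5uO7w3Aoxzppw  80   0    1320217       166798      1.7gb          1.7gb
green  open   origin-v0.9.0 o7FiYJWnTkOViKiAdCXCuA  80   0     263556          400    214.8mb        214.8mb
green  close  origin-v0.5.0 SGplSaqPR_O9cPYU4ZsmdQ  80   0
"""

indices = parse_cat_indices(SAMPLE)
print(f"{reindex_progress(indices, 'origin', 'origin-v0.9.0'):.0%}")  # 20%
```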

Ingestion of the origin_visits* topics has not started yet, so the new fields are not yet present in the index.

The fix is released in version 'v0.9.0'.

I will deploy it on staging and launch a complete reindexation of the metadata (and of the origins, which is needed by other changes in this release).

Jun 16 2021

Build is green

Thanks, the diff has been updated according to your review:

- rename the test to something more generic

- add searches on the boolean field

Jun 15 2021

You should rename the test; it doesn't only test the date anymore.

Build is green

rebase

Jun 11 2021

These are the mappings of the staging and production environments.

As expected, the production mapping has more fields than the staging one.

- The old servers are stopped and the VMs removed from proxmox

- nodes removed from puppet inventory

- Inventory site is updated

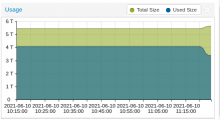

- Journal client for the indexed metadata deployed; the backfill was done in a couple of hours: https://grafana.softwareheritage.org/goto/ndjfw66Gz

- The metadata search via ES is activated on https://webapp1.internal.softwareheritage.org/

Jun 10 2021

- configuration of the swh-search and journal clients services deployed

- Decommissioning of the old nodes in the cluster:

export ES_NODE=192.168.100.86:9200
curl -H "Content-Type: application/json" -XPUT http://${ES_NODE}/_cluster/settings\?pretty -d '{
  "transient" : {
    "cluster.routing.allocation.exclude._ip" : "192.168.100.81,192.168.100.82,192.168.100.83"
  }
}'
{
  "acknowledged" : true,
  "persistent" : { },
  "transient" : {
    "cluster" : {
      "routing" : {
        "allocation" : {
          "exclude" : {
            "_ip" : "192.168.100.81,192.168.100.82,192.168.100.83"
          }
        }
      }
    }
  }
}

The shards are starting to be moved gently off the old servers:

curl -s http://search-esnode4:9200/_cat/allocation\?s\=host\&v
shards disk.indices disk.used disk.avail disk.total disk.percent host           ip             node
    27       38.7gb    38.8gb    153.7gb    192.6gb           20 192.168.100.81 192.168.100.81 search-esnode1
    27       37.7gb    37.8gb    154.8gb    192.6gb           19 192.168.100.82 192.168.100.82 search-esnode2
    22       30.5gb    30.6gb      162gb    192.6gb           15 192.168.100.83 192.168.100.83 search-esnode3
    35         50gb    50.1gb      6.6tb      6.7tb            0 192.168.100.86 192.168.100.86 search-esnode4
    35         50gb    50.2gb      6.6tb      6.7tb            0 192.168.100.87 192.168.100.87 search-esnode5
    34       49.4gb    49.5gb      6.6tb      6.7tb            0 192.168.100.88 192.168.100.88 search-esnode6

Once there are no shards left on the old servers, we will be able to stop them and remove them from the Proxmox server.
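The drain procedure above has two parts: exclude the old node IPs from shard allocation, then poll `_cat/allocation` until those nodes hold no shard. A minimal sketch of both, assuming the whitespace-separated `?v` output shown above; the helper names are hypothetical.

```python
from typing import Dict, Iterable


def exclude_ips_settings(ips: Iterable[str]) -> dict:
    """Body for PUT /_cluster/settings that drains the given node IPs."""
    return {"transient": {"cluster.routing.allocation.exclude._ip": ",".join(ips)}}


def shards_per_ip(cat_allocation: str) -> Dict[str, int]:
    """Map node IP -> shard count from `_cat/allocation?v` output.

    Rows with fewer columns than the header (such as the UNASSIGNED line
    printed when some shards are unassigned) are skipped.
    """
    lines = [line.split() for line in cat_allocation.strip().splitlines()]
    header = lines[0]
    shards_col, ip_col = header.index("shards"), header.index("ip")
    return {row[ip_col]: int(row[shards_col])
            for row in lines[1:] if len(row) == len(header)}


def drained(cat_allocation: str, excluded: Iterable[str]) -> bool:
    """True once no shard is left on any excluded node."""
    counts = shards_per_ip(cat_allocation)
    return all(counts.get(ip, 0) == 0 for ip in excluded)


OLD_NODES = ["192.168.100.81", "192.168.100.82", "192.168.100.83"]
SAMPLE = """\
shards disk.indices disk.used disk.avail disk.total disk.percent host ip node
27 38.7gb 38.8gb 153.7gb 192.6gb 20 192.168.100.81 192.168.100.81 search-esnode1
22 30.5gb 30.6gb 162gb 192.6gb 15 192.168.100.83 192.168.100.83 search-esnode3
35 50gb 50.1gb 6.6tb 6.7tb 0 192.168.100.86 192.168.100.86 search-esnode4
"""
print(drained(SAMPLE, OLD_NODES))  # False
```

A `transient` setting does not survive a full cluster restart; `persistent` would, which matters only if the drain is still in progress when the whole cluster restarts.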

Jun 9 2021

And all the new nodes are now in the production cluster:

curl -s http://search-esnode4:9200/_cat/allocation\?s\=host\&v
shards disk.indices disk.used disk.avail disk.total disk.percent host           ip             node
    30       42.7gb    42.9gb    149.7gb    192.6gb           22 192.168.100.81 192.168.100.81 search-esnode1
    30       41.4gb    41.6gb      151gb    192.6gb           21 192.168.100.82 192.168.100.82 search-esnode2
    30       41.7gb    41.8gb    150.8gb    192.6gb           21 192.168.100.83 192.168.100.83 search-esnode3
    30       41.9gb      42gb      6.6tb      6.7tb            0 192.168.100.86 192.168.100.86 search-esnode4
    30       41.8gb    41.9gb      6.6tb      6.7tb            0 192.168.100.87 192.168.100.87 search-esnode5
    30       41.2gb    41.3gb      6.6tb      6.7tb            0 192.168.100.88 192.168.100.88 search-esnode6

The next step will be to switch the swh-search configurations to the new nodes and progressively remove the old nodes from the cluster.

- zfs installation:

root@search-esnode4:~# apt update && apt install linux-image-amd64 linux-headers-amd64
root@search-esnode4:~# shutdown -r now # to apply the kernel
root@search-esnode4:~# apt install libnvpair1linux libuutil1linux libzfs2linux libzpool2linux zfs-dkms zfsutils-linux zfs-zed

- refresh with the latest packages installed from backports

root@search-esnode4:~# apt dist-upgrade # triggers a udev upgrade, which leads to a network interface renaming
root@search-esnode4:~# sed -i 's/ens1/enp2s0/g' /etc/network/interfaces

- pre-ZFS configuration actions:

root@search-esnode4:~# puppet agent --disable
root@search-esnode4:~# systemctl disable elasticsearch
root@search-esnode4:~# systemctl stop elasticsearch
root@search-esnode4:~# rm -rf /srv/elasticsearch/nodes

- To manage the disks via ZFS, the RAID card had to be configured in enhanced HBA mode in the iDRAC

- after a reboot, the disks are correctly detected by the system:

root@search-esnode4:~# ls -al /dev/sd*
brw-rw---- 1 root disk 8,  0 Jun  9 04:54 /dev/sda
brw-rw---- 1 root disk 8, 16 Jun  9 04:54 /dev/sdb
brw-rw---- 1 root disk 8, 32 Jun  9 04:54 /dev/sdc
brw-rw---- 1 root disk 8, 48 Jun  9 04:54 /dev/sdd
brw-rw---- 1 root disk 8, 64 Jun  9 04:54 /dev/sde
brw-rw---- 1 root disk 8, 80 Jun  9 04:54 /dev/sdf
brw-rw---- 1 root disk 8, 96 Jun  9 04:54 /dev/sdg
brw-rw---- 1 root disk 8, 97 Jun  9 04:54 /dev/sdg1
brw-rw---- 1 root disk 8, 98 Jun  9 04:54 /dev/sdg2
brw-rw---- 1 root disk 8, 99 Jun  9 04:54 /dev/sdg3

root@search-esnode4:~# smartctl -a /dev/sda
smartctl 6.6 2017-11-05 r4594 [x86_64-linux-4.19.0-16-amd64] (local build)
Copyright (C) 2002-17, Bruce Allen, Christian Franke, www.smartmontools.org

Jun 8 2021

We did the following for the 3 servers one at a time (the first one was used for papercuts ;):

May 25 2021

The servers should be installed in the rack on May 26th. The network configuration will follow the same day or the next day.

They will be racked as is by the "DSI", so we will have to install the system via the iDRAC once they are reachable.

May 3 2021

The order seems to have been delivered; I will check with the DSI how to proceed with the installation.

Apr 23 2021

According to the tracking page, the order left the factory on Apr 22, 2021. The ETA is May 28, 2021*.

Apr 20 2021

The order was received and confirmed by Dell. ETA: May 28th.

The details were sent to the sysadm mailing list.

Apparently the order was lost somewhere after it was sent to Dell on April 6th 🤔

It was reissued yesterday...

Apr 9 2021

Apr 8 2021

And this is now available in production.

This has now been deployed and tested in staging with a canary origin (github.com/olasd/Pythagore). Time to deploy in production.

Apr 1 2021

Mar 31 2021

After talking with @rdicosmo, we finally chose to replace, on each server, the 4 × 2.4 TB HDDs with 6 × 1.9 TB SSDs, to make sure we get good performance and enough space for the future.

The quote will now be sent to the purchasing service according to the usual procedure [1].
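For reference, the raw capacity of the two disk options, before any ZFS redundancy or filesystem overhead (a quick check, not a sizing of the usable space):

$$
\begin{aligned}
\text{HDD option (quote):}\quad & 4 \times 2.4\ \text{TB} = 9.6\ \text{TB per server}, & 3 \times 9.6\ \text{TB} &= 28.8\ \text{TB} \\
\text{SSD option (chosen):}\quad & 6 \times 1.9\ \text{TB} = 11.4\ \text{TB per server}, & 3 \times 11.4\ \text{TB} &= 34.2\ \text{TB}
\end{aligned}
$$

The SSD option also leaves 4 of the 10 bays of each enclosure free, which presumably keeps room for later growth.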

Mar 30 2021

Final quotation sent for approval.

The details are:

3 PowerEdge R6515 (1U), each with:

- 10-disk enclosure

- BOSS controller with 2 × 240 GB cards (for the system)

- 4 SAS 2.5" 10k 2.4 TB disks

- SFP+ network card

- 2 SFP cables

- 2 power supplies with their cables

- iDRAC Enterprise

- Rack mount rails with cable management

Mar 25 2021

Mar 13 2021

Hi @zack, we could use the Elasticsearch query string syntax (the `query_string` query) to implement this feature without having to design a syntax from scratch. It is really powerful and can cover most of the use cases.
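As an illustration of the suggestion, a `query_string` search is a small request body; the user's expression is passed through as-is and Elasticsearch parses the field prefixes, boolean operators and quoting. A minimal sketch; the field names in the example are hypothetical and not taken from the swh-search mapping, and `default_operator: AND` is a choice made here (the Elasticsearch default is `OR`).

```python
import json
from typing import Iterable, Optional


def query_string_body(user_query: str, fields: Optional[Iterable[str]] = None,
                      size: int = 10) -> dict:
    """Body for POST /<index>/_search using an Elasticsearch query_string query."""
    query_string = {"query": user_query, "default_operator": "AND"}
    if fields is not None:
        # Fields searched when a term has no explicit "field:" prefix.
        query_string["fields"] = list(fields)
    return {"query": {"query_string": query_string}, "size": size}


# Hypothetical field names, for illustration only.
body = query_string_body('language:python AND license:"MIT"', fields=["url", "description"])
print(json.dumps(body, indent=2))
```

One caveat when exposing this to end users: `query_string` rejects syntactically invalid input with an error, whereas `simple_query_string` ignores invalid parts, so the webapp would need to report parse errors gracefully.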

Mar 10 2021

Mar 8 2021

Mar 5 2021

Thanks for the feedback

Sharing resources with the existing esnodes is a non-starter IMO; we probably want SSD storage for this. Plus they're at (physical) capacity.

I forgot one step: removing the previous alias origin -> origin-production, which is no longer needed:

vsellier@search-esnode1 ~ % curl -s http://$ES_SERVER/_cat/indices\?v && echo && curl -s http://$ES_SERVER/_cat/aliases\?v && echo && curl -s http://$ES_SERVER/_cat/health\?v
health status index             uuid                   pri rep docs.count docs.deleted store.size pri.store.size
green  open   origin-production hZfuv0lVRImjOjO_rYgDzg  90   1  153130652     26701625    273.4gb        137.3gb

awesome

The new configuration is deployed; swh-search now uses the aliases, which should help with future upgrades.

Deployment in production:

- puppet stopped

- configuration updated to declare the index; this is needed so that swh-search initializes the aliases before the journal clients start (which is not guaranteed with a puppet apply)

- package updated

- gunicorn-swh-search service restarted:

Mar 05 09:08:46 search1 python3[1881743]: 2021-03-05 09:08:46 [1881743] gunicorn.error:INFO Starting gunicorn 19.9.0
Mar 05 09:08:46 search1 python3[1881743]: 2021-03-05 09:08:46 [1881743] gunicorn.error:INFO Listening at: unix:/run/gunicorn/swh-search/gunicorn.sock (1881743)
Mar 05 09:08:46 search1 python3[1881743]: 2021-03-05 09:08:46 [1881743] gunicorn.error:INFO Using worker: sync
Mar 05 09:08:46 search1 python3[1881748]: 2021-03-05 09:08:46 [1881748] gunicorn.error:INFO Booting worker with pid: 1881748
Mar 05 09:08:46 search1 python3[1881749]: 2021-03-05 09:08:46 [1881749] gunicorn.error:INFO Booting worker with pid: 1881749
Mar 05 09:08:46 search1 python3[1881750]: 2021-03-05 09:08:46 [1881750] gunicorn.error:INFO Booting worker with pid: 1881750
Mar 05 09:08:46 search1 python3[1881751]: 2021-03-05 09:08:46 [1881751] gunicorn.error:INFO Booting worker with pid: 1881751
Mar 05 09:08:53 search1 python3[1881750]: 2021-03-05 09:08:53 [1881750] swh.search.api.server:INFO Initializing indexes with configuration:
Mar 05 09:08:53 search1 python3[1881750]: 2021-03-05 09:08:53 [1881750] elasticsearch:INFO HEAD http://search-esnode2.internal.softwareheritage.org:9200/origin-production [status:200 request:0.023s]
Mar 05 09:08:54 search1 python3[1881750]: 2021-03-05 09:08:54 [1881750] elasticsearch:INFO PUT http://search-esnode1.internal.softwareheritage.org:9200/origin-production/_alias/origin-read [status:200 request:0.487s]
Mar 05 09:08:54 search1 python3[1881750]: 2021-03-05 09:08:54 [1881750] elasticsearch:INFO PUT http://search-esnode3.internal.softwareheritage.org:9200/origin-production/_alias/origin-write [status:200 request:0.152s]
Mar 05 09:08:54 search1 python3[1881750]: 2021-03-05 09:08:54 [1881750] elasticsearch:INFO PUT http://search-esnode1.internal.softwareheritage.org:9200/origin-production/_mapping [status:200 request:0.009s]

vsellier@search-esnode1 ~ % curl -s http://$ES_SERVER/_cat/indices\?v && echo && curl -s http://$ES_SERVER/_cat/aliases\?v && echo && curl -s http://$ES_SERVER/_cat/health\?v
health status index             uuid                   pri rep docs.count docs.deleted store.size pri.store.size
green  open   origin-production hZfuv0lVRImjOjO_rYgDzg  90   1  153097672    144224208    288.1gb          149gb